Protecting Yourself From Deepfakes in 2026


Deepfake porn is exploding and the law is finally catching up. Here's every tool, legal weapon, and practical step you need to get non-consensual AI nudes removed and protect your identity online.

How Big Is the Deepfake Porn Problem?

Between 96% and 98% of all deepfake content online is non-consensual intimate imagery, and virtually all victims are women. The problem has grown from a niche concern to a full-blown epidemic that affects everyday people, not just celebrities.

Why Should Porn Consumers Care?

If you're browsing adult content regularly, deepfake protection is directly relevant to you. Someone only needs a single clear photo of your face to generate a realistic AI nude in under 25 minutes using free software. That image could end up on a tube site or in a Telegram group, or be used for sextortion.

The adult industry itself is actively fighting this problem. Legitimate platforms listed on directories like MyPornBible invest in content verification, age checks, and consent documentation. The sites that skip these steps are the ones where deepfakes thrive.

How Can You Tell If a Deepfake Exists of You?

Reverse image search is the fastest way to discover if someone has created non-consensual AI-generated content using your likeness. Google Images, TinEye, and specialized tools like PimEyes can scan billions of pages in seconds.

Reverse Image Search Methods

Upload a clear headshot to Google Images reverse search, TinEye, or Yandex Images. These tools crawl indexed websites and return pages where matching or visually similar images appear. PimEyes goes further by using facial recognition to find your face across the web, even in modified or AI-altered images.

Run searches periodically, not just once. New content gets indexed daily. Setting a monthly calendar reminder to check is a simple habit that can catch problems before they spread.

Signs an Image or Video Is a Deepfake

Look for inconsistencies around hair edges, earlobes, and teeth. Deepfakes frequently struggle with asymmetric accessories like earrings or glasses. Skin texture may appear too smooth or waxy compared to the rest of the body.

In video deepfakes, watch for unnatural blinking patterns, flickering around the jawline during speech, and lighting mismatches between face and body. Humans can only identify high-quality video deepfakes about 24.5% of the time, so AI detection tools are essential for confirmation.

Studies show that just 0.1% of people can accurately identify all deepfakes in a controlled test. If something looks suspicious, don't rely on your own eyes — use a detection tool like Sensity AI, Microsoft Video Authenticator, or Intel FakeCatcher.

How Do You Remove AI-Generated Nudes?

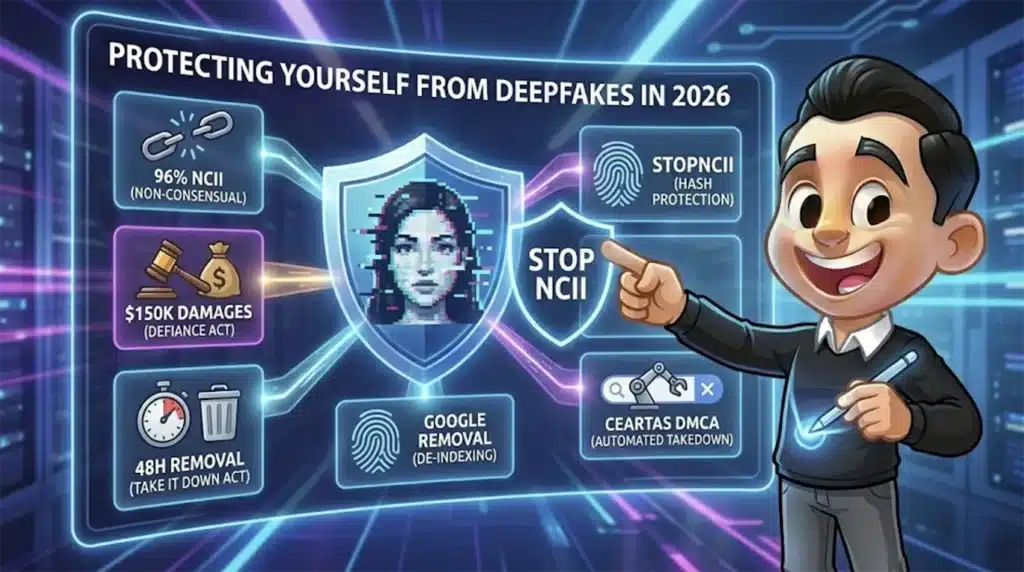

The TAKE IT DOWN Act, signed into law on May 19, 2025, requires online platforms to remove non-consensual intimate imagery within 48 hours of receiving a valid report. This is the most powerful removal tool currently available to deepfake victims in the United States.

DMCA Takedown Notices

If the deepfake uses photographs you took yourself (selfies, for example) as source material, you likely hold the copyright to those images. Filing a DMCA takedown notice with the hosting platform and with Google directly forces them to remove the content or face legal liability.

Google provides a specific form for reporting non-consensual explicit images. Submitted requests typically result in the content being de-indexed from search results within days, cutting off the primary way people find such material.

StopNCII.org — Hash-Based Protection

StopNCII.org is a free tool operated by the Revenge Porn Helpline. It generates a unique digital fingerprint (hash) of an intimate image on your own device without uploading the actual photo. Participating platforms then match against this hash to proactively detect and block the image from being shared.

StopNCII now explicitly covers deepfakes. If a synthetic AI image exists of you and you have access to it, you can hash it and add it to their database. Facebook, Instagram, TikTok, Bumble, and other major platforms participate in this program.
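To make the fingerprinting idea concrete, here is a minimal Python sketch of a perceptual "average hash" computed entirely on the local machine. It is an illustration only and not StopNCII's actual algorithm; the function names, the 4x4 grayscale grid, and the sample pixel values are all assumptions made up for this example.

```python
from typing import List


def average_hash(pixels: List[List[int]]) -> int:
    """Build a bit fingerprint: 1 where a pixel is brighter than the mean."""
    flat = [value for row in pixels for value in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for value in flat:
        bits = (bits << 1) | (1 if value > mean else 0)
    return bits


def hamming_distance(a: int, b: int) -> int:
    """Count the bits that differ between two fingerprints."""
    return bin(a ^ b).count("1")


# Hypothetical 4x4 grayscale thumbnail (0-255), standing in for a real image.
original = [
    [10, 200, 30, 220],
    [15, 210, 25, 230],
    [12, 205, 35, 225],
    [18, 215, 28, 235],
]
# A slightly brightened copy, as might result from re-encoding.
recompressed = [[min(255, v + 3) for v in row] for row in original]
# A different picture: the same rows mirrored left to right.
unrelated = [row[::-1] for row in original]

same = hamming_distance(average_hash(original), average_hash(recompressed))
different = hamming_distance(average_hash(original), average_hash(unrelated))
print(same, different)  # 0 16
```

Only the fingerprint would need to leave the device in a scheme like this. A small distance between two fingerprints suggests the same picture, which is why a lightly edited copy can still match.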

Requesting Removal Under the TAKE IT DOWN Act

By May 2026, every major platform hosting user-generated content must have a formal notice-and-takedown process for non-consensual intimate imagery. The platform has 48 hours from receiving a valid notice to remove the content and must also make reasonable efforts to remove identical copies.

The FTC enforces the platform takedown requirement. Separately, knowingly publishing non-consensual intimate imagery carries criminal penalties, including fines and up to two years in prison when adults are depicted and three years when minors are depicted. This law applies to both authentic revenge porn and AI-generated deepfakes.

| Removal Method | Cost | Speed | Best For |
|---|---|---|---|
| DMCA Takedown | Free (DIY) | 3–14 days | Content using your photos |
| StopNCII.org | Free | Ongoing / proactive | Preventing re-uploads |
| TAKE IT DOWN Act | Free | 48 hours | Platform-hosted NCII |
| Google Removal Request | Free | 1–7 days | De-indexing from search |
| Professional Service (e.g., Ceartas) | $99+/month | Automated / ongoing | Creators, public figures |

What Laws Protect Deepfake Victims?

The DEFIANCE Act, passed unanimously by the U.S. Senate in January 2026 and still awaiting a House vote, would establish a federal civil right of action allowing victims of non-consensual deepfakes to sue creators and distributors for up to $150,000 in statutory damages, or $250,000 when linked to stalking, harassment, or sexual assault.

The Senate vote followed the Grok AI chatbot controversy on X (formerly Twitter), in which the chatbot generated sexualized deepfakes of real people, including minors, and X failed to remove exploitative images even after public outcry.

State-Level Protections

As of early 2026, 32 U.S. states have passed laws addressing deepfakes or revenge porn. California expanded protections allowing victims of AI-generated porn to sue for damages. Virginia and Maryland updated revenge porn statutes to explicitly criminalize synthetic intimate images.

Outside the U.S., the UK Online Safety Act requires platforms to remove non-consensual intimate imagery including AI deepfakes once notified. Australia's Criminal Code Amendment makes creating or sharing realistic deepfake sexual material a federal crime carrying up to six years in prison. France amended its Penal Code to criminalize non-consensual sexual deepfakes with penalties up to two years and €60,000 in fines.

What the DEFIANCE Act Means for You

If enacted, the DEFIANCE Act would allow victims to sue anyone who knowingly creates, distributes, or possesses with intent to disclose sexually explicit deepfakes without consent. Attorney fees could be recovered, making a lawsuit financially viable even for individuals without significant resources.

The bill specifically targets platforms that show reckless disregard for non-consensual content. As Senator Durbin stated, companies like X that refuse to remove exploitative content could be held civilly liable for damages. The adult sites recommended on MyPornBible take consent verification seriously, and choosing verified platforms is your first line of defense.

How Do You Prevent Deepfakes of Yourself?

Limiting publicly available high-resolution photos of your face is the single most effective prevention strategy. Deepfake generators need clear facial images to work, so fewer source images mean lower-quality fakes that are harder to create.

Reduce Your Digital Footprint

Audit your social media profiles. Set Instagram, Facebook, and TikTok accounts to private. Remove or limit public-facing photo galleries where high-resolution face images are easily downloadable. Deepfake tools can work with a single clear photo, but multiple angles dramatically improve the result.

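Consider using a tool like Fawkes, developed at the University of Chicago. It applies imperceptible pixel-level alterations to your photos that are designed to confuse AI face recognition systems without changing how the image looks to human eyes. Glaze, from the same lab, uses a similar cloaking approach but is aimed at protecting artwork from style mimicry rather than faces.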

Digital Watermarking

Invisible watermarking embeds ownership data directly into the pixels of your image files. Services like Digimarc and Imatag add an imperceptible signal designed to survive screenshots, resizing, and basic editing. If a deepfake is created from a watermarked source image, forensic analysis may be able to trace it back and help prove it was derived from your original.

Content Credentials, built on the C2PA standard, is an emerging provenance framework backed by Adobe, Microsoft, and the BBC. Photos taken with C2PA-enabled cameras or apps carry a verifiable chain of custody showing who created the image and when, which makes it easier to prove a deepfake is fraudulent.

Best Deepfake Protection Tools

StopNCII.org, Google's NCII removal form, and Ceartas DMCA are the three most effective tools for removing non-consensual intimate content from the internet in 2026. Each serves a different purpose in your protection strategy.

| Tool | Type | Cost | What It Does |
|---|---|---|---|
| StopNCII.org | Prevention | Free | Creates hash fingerprints of intimate images. Participating platforms auto-detect and block matches. |
| Google NCII Form | Removal | Free | De-indexes non-consensual images from Google Search results, cutting off discoverability. |
| Ceartas DMCA | Automated Removal | From $99/mo | AI-powered scanning and automated DMCA takedowns across the entire web, including Telegram. |
| NCMEC Take It Down | Minors Only | Free | Removes sexual images of minors (including if you were under 18 when the image was created). |
| PimEyes | Detection | Free + Paid | Facial recognition search engine that finds your face across indexed websites. |
| Cyber Civil Rights Initiative | Legal Help | Free | Crisis helpline, legal referrals, and guidance for image-based sexual abuse victims. |

If you create content on platforms like OnlyFans, Fansly, or cam sites (check our best cam sites guide for verified platforms), automated DMCA services like Ceartas are virtually essential. They continuously scan the web for leaked content and issue takedowns at scale — something impossible to do manually when you have thousands of images circulating.

Step-by-Step Removal Action Plan

If you discover a deepfake of yourself, act immediately. Faster reporting leads to faster removal and limits the spread of the content. Follow this step-by-step plan to address the situation methodically.

Step 1: Document Everything

Take screenshots of every page displaying the content, including URLs, timestamps, and any visible usernames. Save the metadata. This evidence is critical for legal proceedings under the DEFIANCE Act and for filing effective takedown requests.
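One way to keep this documentation consistent is a small evidence log. The Python sketch below is only an assumption about how such a log might look: `evidence_record`, its field names, and the example URL and username are hypothetical. It stores the URL, a UTC timestamp, and a SHA-256 digest of the screenshot so you can later show the file has not been altered.

```python
import hashlib
import json
from datetime import datetime, timezone


def evidence_record(url: str, screenshot_bytes: bytes, username: str = "") -> dict:
    """Bundle one sighting of the content into a log entry."""
    return {
        "url": url,
        "username": username,
        # Time the record was created, in UTC, so entries sort unambiguously.
        "captured_at_utc": datetime.now(timezone.utc).isoformat(),
        # Digest of the screenshot file; any later edit changes this value.
        "screenshot_sha256": hashlib.sha256(screenshot_bytes).hexdigest(),
    }


# Placeholder bytes stand in for the contents of a real screenshot file,
# which you would read with open(path, "rb").read().
record = evidence_record(
    "https://example.com/post/123",
    b"placeholder screenshot bytes",
    username="uploader42",
)
print(json.dumps(record, indent=2))
```

Appending each record to a single JSON file gives you one dated list of URLs to paste into takedown requests or hand to an attorney.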

Step 2: Report to the Platform

Use the platform's built-in reporting system to flag the content as non-consensual intimate imagery. Under the TAKE IT DOWN Act, the platform has 48 hours to remove it. If the platform has no reporting mechanism yet, it must implement one by May 2026.
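Tracking the 48-hour window is simple date arithmetic. This Python sketch assumes the clock starts at the moment you submit the report, which is a simplification, since the law counts from the platform receiving a valid notice. The function names and the example date are hypothetical.

```python
from datetime import datetime, timedelta, timezone

# The removal window described above.
REMOVAL_WINDOW = timedelta(hours=48)


def removal_deadline(reported_at: datetime) -> datetime:
    """Return the time by which the platform should have acted."""
    return reported_at + REMOVAL_WINDOW


def is_overdue(reported_at: datetime, now: datetime) -> bool:
    """True once the window has passed without it being reset."""
    return now > removal_deadline(reported_at)


# Hypothetical report submitted on 1 June 2026 at 14:30 UTC.
reported = datetime(2026, 6, 1, 14, 30, tzinfo=timezone.utc)
deadline = removal_deadline(reported)
print(deadline.isoformat())  # 2026-06-03T14:30:00+00:00
```

Recording the report time in UTC avoids confusion when the platform and the reporter are in different time zones.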

Step 3: File a DMCA Takedown

Submit a DMCA takedown notice to the hosting provider and to Google. You can file with Google through their legal help center. Include your contact information, a description of the original photos you own, the specific URLs where the content appears, and a statement that the use was not authorized by you.
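Before sending a notice, it can help to check that nothing is missing. The Python sketch below paraphrases the six elements listed in 17 U.S.C. § 512(c)(3); the dictionary keys, the `missing_fields` helper, and the sample draft are hypothetical names chosen for this example, not part of any official form.

```python
from typing import Dict, List

# Paraphrased notice elements; keys are made-up labels for this sketch.
REQUIRED_FIELDS: Dict[str, str] = {
    "signature": "Physical or electronic signature of the copyright owner or agent",
    "copyrighted_work": "Identification of the original work (your photo)",
    "infringing_urls": "Location of the material to be removed",
    "contact_info": "Address, phone number, or email for the complainant",
    "good_faith_statement": "Statement that the use is not authorized",
    "accuracy_statement": "Statement of accuracy, under penalty of perjury",
}


def missing_fields(notice: dict) -> List[str]:
    """List required elements that are absent or empty in a draft notice."""
    return [name for name in REQUIRED_FIELDS if not notice.get(name)]


# Hypothetical incomplete draft.
draft = {
    "copyrighted_work": "Selfie taken by me in March 2024",
    "infringing_urls": ["https://example.com/post/123"],
    "contact_info": "jane@example.com",
    "good_faith_statement": True,
}
print(missing_fields(draft))  # ['signature', 'accuracy_statement']
```

An empty list means the draft covers every element in the checklist; it does not guarantee that a given host will accept the notice.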

Step 4: Register With StopNCII

Hash the offending images through StopNCII.org to prevent them from being re-uploaded to participating platforms. This creates an ongoing shield even after the initial content is removed.

Step 5: Explore Legal Action

Contact the Cyber Civil Rights Initiative (CCRI) crisis helpline for free guidance. If the DEFIANCE Act passes the House, you may have a federal civil claim for up to $150,000. Many state laws also provide avenues for damages. An attorney specializing in image-based abuse can evaluate your options.

The adult industry respects consent — and so should every consumer. When browsing, stick to verified platforms. MyPornBible's curated directory only lists sites that follow basic content verification standards. If you spot suspected deepfake content on any platform, report it. You might be the reason someone gets their life back.

Key Takeaways

- Deepfakes are everyone's problem. Someone needs just one photo and 25 minutes to generate a fake nude of anyone. Over 96% of deepfake content online is non-consensual intimate imagery targeting women.

- The law is finally catching up. The TAKE IT DOWN Act (2025) forces platforms to remove NCII within 48 hours. The DEFIANCE Act, passed by the Senate in 2026, would let victims sue for up to $250,000 in damages if it clears the House. Thirty-two states have passed additional protections.

- Free tools exist to fight back. StopNCII.org, Google's NCII removal form, and the Cyber Civil Rights Initiative all provide free support. Use reverse image search regularly to monitor your exposure.

- Prevention beats reaction. Limit public high-res face photos, set social media to private, use face-cloaking tools like Fawkes, and enable digital watermarking on content you publish.

- Support ethical platforms. Consuming content from verified, consent-respecting sites reduces demand for non-consensual material and makes the entire ecosystem safer for everyone.
